Who Will Win the U.S. Presidency? Betting Markets vs. Prediction Models

I see you. My psychic powers enable me, from a great distance, to peer into your heart and to sense your unease. Regardless of your political leanings, you understand the upcoming U.S. election to be momentous, world-changing, the most important of your lifetime. Part of you is hopeful, but a larger part of you is feeling tense. Anxious. Fearing a future that would follow the outcome you dread.

Hungering for indications of the likely outcome, you read the latest commentary and devour the latest polls. You may even glean insight from the betting markets (here and here), which offer “the wisdom of the crowd.” They are akin to stock markets, in which people place bets on future stock values, with the current market value—the midpoint between those expecting a stock to rise and those expecting it to fall—representing the distillation of all available information and insight. As stock market booms and busts remind us, the crowd sometimes displays an irrational exuberance or despair. Yet, as Princeton economist Burton Malkiel has repeatedly demonstrated, no individual stock picker (or mutual fund) has had the smarts to consistently outguess the efficient marketplace.

You may also, if you are a political geek, have welcomed clues to the election outcome from prediction models (here and here) that combine historical information, demographics, and poll results to forecast the result.

But this year, the betting markets and the prediction models differ sharply. The betting markets see a 34 percent chance of a Trump victory, while the prediction models see but a 5 to 10 percent chance. So whom should we believe?

Skeptics scoff that the poll-influenced prediction models erred in 2016. FiveThirtyEight’s final election forecast gave Donald Trump only a 28 percent chance of winning. So, was it wrong? Consider a simple prediction model that predicts a baseball player’s chance of a hit based on the player’s batting average. If a .280 hitter came to the plate and got a hit, would we discount our model? Of course not, because we understand the model’s prediction that sometimes (28 percent of the time, in this case), the less likely outcome will happen. (If it never does, the model errs.)
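For readers who like to see the arithmetic, here is a minimal sketch of that batting-average logic. It is only an illustration, not anything FiveThirtyEight runs: the function name `simulate_hit_rate`, the number of trials, and the random seed are all assumptions chosen for the example. It simulates many independent events that each carry a 28 percent chance and reports how often they occurred.

```python
import random


def simulate_hit_rate(probability, trials, seed=0):
    """Simulate `trials` independent events, each with the given chance
    of occurring, and return the fraction that actually occurred.

    Hypothetical helper for illustration; the seed only makes runs repeatable.
    """
    rng = random.Random(seed)
    hits = sum(rng.random() < probability for _ in range(trials))
    return hits / trials


if __name__ == "__main__":
    # A .280 hitter, or a candidate given a 28 percent chance of winning.
    rate = simulate_hit_rate(0.28, 100_000)
    print(f"Events forecast at 28 percent occurred {rate:.1%} of the time")
```

Over many trials, the observed rate should settle close to 28 percent. A single at bat, or a single election, cannot show whether the forecast was well calibrated. Only a long run of such forecasts can.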

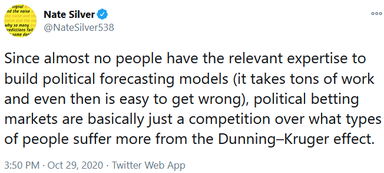

But why do the current betting markets diverge from the prediction models? FiveThirtyEight modeler Nate Silver has an idea:

The Dunning-Kruger effect, as psychology students know, is the repeated finding that incompetence tends not to recognize itself. As one person explained to those unfamiliar with Silver’s allusion:

Others noted that the presidential betting markets, unlike the stock markets, are drawing on limited (once every four years) information—with people betting only small amounts on their hunches, and without the sophisticated appraisal that informs stock investing.

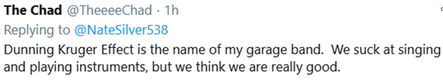

And what are their hunches? Surely, these are informed by the false consensus effect—our tendency to overestimate the extent to which others share our views. Thus, in the University of Michigan’s July Survey of Consumers, 83 percent of Democrats and 84 percent of Republicans predicted that voters would elect their party’s presidential candidate.

Ergo, bettors are surely, to some extent, drawing on their own preferences, which—thanks to the false consensus effect—inform their predictions. What we are, we see in others.

So, if I were a betting person, I would wager based on the prediction models. Usually, there is wisdom to the crowd. But sometimes . . . we shall soon see . . . the crowd is led astray by the whispers of its own inner voices.

-----

P.S. At 10:30 a.m. on Election Day, the Economist model projects a 78 percent chance of a Biden Florida victory, FiveThirtyEight.com projects a Biden Florida victory with a 2.5 percent vote margin, and the electionbettingodds.com betting market average estimates a 62 percent chance of a Trump Florida victory. Who's right--the models or the bettors? Stay tuned!

P.P.S. on November 4: Mea culpa. I was wrong. Although the models--like weather forecasts estimating the percent chance of rain--allow for unlikely possibilities, the wisdom of the betting crowd won this round--both in Florida and in foreseeing a closer-than-expected election.

(For David Myers’ other essays on psychological science and everyday life, visit TalkPsych.com.)
