“How Can They Be So Stupid?”

“Listen, we all hoped and prayed the vaccines would be 100 percent effective, 100 percent safe, but they’re not. We now know that fully vaccinated individuals can catch Covid, they can transmit Covid. So what’s the point?” ~U.S. Senator Ron Johnson, Fox News, December 27, 2021

“I have made a ceaseless effort not to ridicule, not to bewail, not to scorn human actions, but to understand them.” ~Baruch Spinoza, Political Treatise, 1677

Many are aghast at the irony: Unvaccinated, unmasked Americans remain much less afraid of the Covid virus than are their vaccinated, masked friends and family.

This, despite two compelling and well-publicized facts:

- Covid has been massively destructive. With 5.4 million confirmed Covid deaths worldwide (and some 19 million excess Covid-era deaths), Covid is a great enemy. In the U.S., the 840,000 confirmed deaths considerably exceed those from all of its horrific 20th-century wars.

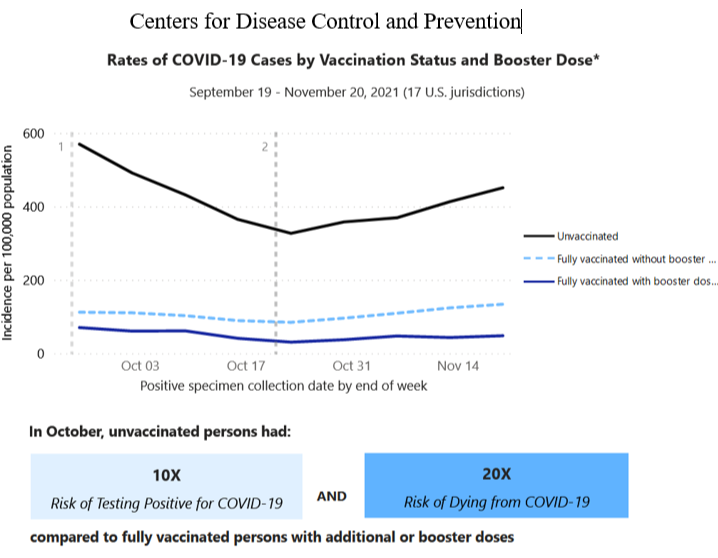

- The Covid vaccines are safe and dramatically effective. The experience of 4.5+ billion people worldwide who have received a Covid vaccine assures us that they entail no significant risks of sickness, infertility, or miscarriage. Moreover, as the CDC illustrates, fully vaccinated and boosted Americans this past fall had a 10 times lower risk of testing positive for Covid and a 20 times lower risk of dying from it.

Given Covid’s virulence, why wouldn’t any reasonable person welcome the vaccine and other non-constraining health-protective measures? How can a U.S. senator scorn protection that is 90+ percent effective? Does he also shun less-than-100%-effective seat belts, birth control, tooth brushing, and the seasonal flu vaccine that his doctor surely recommends?

To say bluntly what so many are wondering: Has Covid become a pandemic of the stupid?

Lest we presume so, psychological science has repeatedly illuminated how even smart people can make not-so-smart judgments. As Daniel Kahneman and others have demonstrated, intelligent people often make dumb decisions. Researcher Keith Stanovich explains: Some biases—such as our favoring evidence that confirms our preexisting views—have “very little relation to intelligence.”

So, if we’re not to regard the resilient anti-vax minority as stupid, what gives?

If, with Spinoza, we wish not to ridicule but to understand, several psychological dynamics can shine light.

Had we all, like Rip Van Winkle, awakened to the clear evidence of Covid’s virulence and the vaccines’ efficacy, surely we would have more unanimously accepted these stark realities. Alas, today’s science-scorning American subculture seeded skepticism about Covid before the horror was fully upon us. Vaccine suspicion was then sustained by several social psychological phenomena that we all experience. Once people’s initial views were formed, confirmation bias inclined them to seek and welcome belief-confirming information. Motivated reasoning bent their thinking toward justifying what they had come to believe. Aided by social and broadcast media, group polarization further amplified and fortified the shared views of the like-minded. Misinformation begat more misinformation.

Moreover, a powerful fourth phenomenon was at work: belief perseverance.

Researchers Craig Anderson, Mark Lepper, and Lee Ross explored how people, after forming and explaining beliefs, resist changing their minds. In two of social psychology’s great but lesser known experiments, they planted an idea in Stanford undergraduates’ minds. Then they discovered how difficult it was to discredit the idea, once rooted.

Their procedure was simple. Each study first implanted a belief, either by proclaiming it to be true or by offering anecdotal support. One experiment invited students to consider whether people who take risks make good or bad firefighters. Half looked at cases about a risk-prone person who was successful at firefighting and a cautious person who was not. The other half considered cases suggesting that a risk-prone person was less successful at firefighting. Unsurprisingly, the students came to believe what their case anecdotes suggested.

Then the researchers asked all the students to explain their conclusion. Those who had decided that risk-takers make better firefighters explained, for instance, that risk-takers are brave. Those who had decided the opposite explained that cautious people have fewer accidents.

Lastly, Anderson and his colleagues exposed the ruse. They let students in on the truth: The cases were fake news. They were made up for the experiment, with other study participants receiving the opposite information.

With the truth now known, did the students’ minds return to their pre-experiment state? Hardly. After the fake information was discredited, the participants’ self-generated explanations sustained their newly formed beliefs that risk-taking people really do make better (or worse) firefighters.

So, beliefs, once having “grown legs,” will often survive discrediting. As the researchers concluded, “People often cling to their beliefs to a considerably greater extent than is logically or normatively warranted.”

In another clever Stanford experiment, Charles Lord and colleagues engaged students with opposing views of capital punishment. Each side viewed two supposed research findings, one supporting and the other contesting the idea that the death penalty deters crime. So, given the same mixed information, did their views later converge? To the contrary, each side was impressed with the evidence supporting their view and disputed the challenging evidence. The net result: Their disagreement increased. Rather than using evidence to form conclusions, they used their conclusions to assess evidence.

And so it has gone in other studies, when people selectively welcomed belief-supportive evidence about same-sex marriage, climate change, and politics. Ideas persist. Beliefs persevere.

The belief-perseverance findings reprise the classic When Prophecy Fails study led by cognitive dissonance theorist Leon Festinger. Festinger and his team infiltrated a religious cult whose members had left behind jobs, possessions, and family as they gathered to await the world’s end on December 21, 1954, and their rescue via flying saucer. When the prophecy failed, did the cult members abandon their beliefs as utterly without merit? They did not, and instead agreed with their leader’s assertion that their faithfulness “had spread so much light that God had saved the world from destruction.”

These experiments are provocative. They indicate that the more we examine our theories and beliefs and explain how and why they might be true, the more closed we become to challenging information. When we consider and explain why a favorite stock might rise in value, why we prefer a particular political candidate, or why we distrust vaccinations, our suppositions become more resilient. Having formed and repeatedly explained our beliefs, we may become prisoners of our own ideas. Thus, it takes more compelling arguments to change a belief than it does to create it.

Republican representative Adam Kinzinger understands: “I’ve gotten to wonder if there is actually any evidence that would ever change certain people’s minds.” Moreover, the phenomenon cuts both ways, and surely affects the still-fearful vaccinated and boosted people who have hardly adjusted their long-ago Covid fears to the post-vaccine, Omicron new world.

The only known remedy is to “consider the opposite”—to imagine and explain a different result. But unless blessed with better-than-average intellectual humility, as exhibited by most who accept vaccine science, we seldom do so.

Yet there is good news. If employers mandate either becoming vaccinated or getting tested regularly, many employees will choose vaccination. As laboratory studies remind us, and as real-world studies of desegregation and seat belt mandates confirm, our attitudes will then follow our actions. Behaving will become believing.

(For David Myers’ other essays on psychological science and everyday life, visit TalkPsych.com. Follow him on Twitter: @davidgmyers.)
